O brave new world,

That has such people in ’t!

—The Tempest

When I first wrote about ChatGPT three years ago, concerns about AI in the classroom were just beginning to emerge. Much handwringing was done over fears of rampant cheating, especially in the text-heavy disciplines such as English and history, and anxiety among educators steadily mounted that AI tutors might soon be coming for people’s jobs. There was an almost universal apprehension that the digital age’s ultimate disruptor to education had perhaps finally arrived. Lots of angst.

Which now seems positively quaint.

Because today, we have computers grading computers; brain scans showing AI inhibiting neurons; and thought leaders coining a new term, “anti-intelligence,” to describe what is happening to our youngest minds (more on this later). Enormous data centers are sprouting like weeds, with the same corresponding economic costs and environmental harm as their literal botanical counterparts, and for over a year now, I have received at least two offers a day on LinkedIn to earn hourly income training artificial intelligences that have a biology focus.

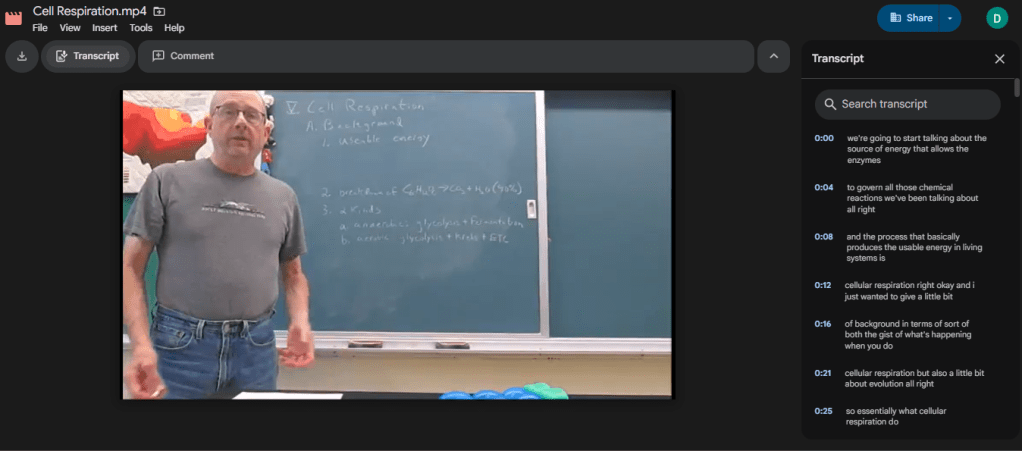

Yet the real eye-opening/face-slapping/jaw-dropping/pick-your-cliché moment for me recently was when I discovered that all my video lectures for my senior electives now open online with a searchable, 100% AI-generated transcript of what I am saying, one that I neither created nor gave any permission to create. Google’s AI simply spontaneously takes my entire audio and creates the corresponding text on the screen to the right of the visual component, all in the few seconds it takes to start the usually 35-40 minute video. Here’s a screenshot for any skeptic:

Now, I would hope that the implications of what Google is now doing spontaneously would evoke at least a quiver of discomfort (if not outright abject terror!). But if not, then my reader is probably itself an LLM AI to begin with, scouring the internet for its own training purposes, no emotional response required. My writing has simply made it more proficient at invading what little remains of my already barely existent privacy.

However, what disturbed me most when discovering Google AI’s latest feature was not the act itself; it was the reality that here was one less opportunity for my students to have to think for themselves. As English teacher Thomas David Moore sums it up:

There is nothing new about students trying to get one over on their teachers — there are probably cuneiform tablets about it — but when students use AI to generate what Shannon Vallor, philosopher of technology at the University of Edinburgh, calls a “truth-shaped word collage,” they are not only gaslighting the people trying to teach them, they are gaslighting themselves. In the words of Tulane professor Stan Oklobdzija, asking a computer to write an essay for you is the equivalent of “going to the gym and having robots lift the weights for you.”

And without opportunities for cognitive heavy-lifting, brains atrophy; minds devolve; and the entire point of education becomes at risk.

But that brings me back to what I mentioned earlier, the notion of “anti-intelligence.” As its originator, John Nosta, describes it:

Anti-intelligence is not stupidity or some sort of cognitive failure. It’s the performance of knowing without understanding. It’s language severed from memory, context, and even intention. It’s what large language models (LLMs) do so well. They produce coherent outputs through pattern-matching rather than comprehension. Where human cognition builds meaning through the struggle of thought, anti-intelligence arrives fully formed.

Thus, for example, when Google automatically transcribes my lectures, my students do not have to wrestle with grasping the cognitive story I am asking them to learn by watching and engaging with the video; they can simply look up the factoid they need for a particular question, without any concern for the larger intellectual context within which that question resides. In other words, they no longer need to learn anything from my lectures; they just need them as employable databases.

Which is fine, I freely acknowledge, if you already know how to think. I do not need to possess all human knowledge in my brain because I possess the critical thinking skills honed by decades of training that enable me to effectively employ those databases containing that knowledge for constructive cognitive purposes. Where things become problematic is that anti-intelligence has become the “cognitive climate” where the minds of today’s youngest children develop, and “when AI answers arrive instantly from childhood, it may affect whether certain cognitive capacities develop.” Every theory of brain development is clear: children learn through a series of encounters with constraints that carry costs when mistakes are made. Without both those costs and those constraints, they will fail to generate both the necessary knowledge and the intellectual capacity to make steadily more informed decisions.

Yet today’s children, as Nosta points out, “aren’t just using artificial intelligence (AI) as a study aid; they’re building their cognitive patterns in an environment where answers arrive before questions even fully form.” We have never lived in such a world, and that’s what makes the potential future of AI in education so troubling: the pathway the brain needs to follow during childhood “doesn’t just make thinking harder; it makes thinking possible.” If we remove that path, do we remove thinking?

It’s a disturbing (if not distressing) thought, especially given that 61% of Americans can’t name the three branches of government, half our adults can’t read a book written at the eighth-grade level, and (my personal favorite) 25% of us apparently still think the sun revolves around the earth rather than the other way around! Add in the fact that nearly half of college graduates report never reading another book of any kind following graduation and that significant majorities of today’s youth report being either bored or otherwise disengaged at school, and the notion that AI could make this situation even worse is positively disheartening. We are already a society where “the rejection of learned knowledge is often seen as an expression of personal liberty” and “hostility to education is now actively separating us from a shared reality” (Millet, p. 148). If AI’s increasing ubiquity inhibits our collective cognitive capacity beyond the damage digital technologies and underfunding have already done to our educational systems, then we really are “sitting ducks for tyrants and profiteers, willing to believe whatever tales they choose to tell us” (Millet, p. 149).

Lest we “abandon all hope,” though, I need to point out that steadily increasing numbers of us in education, at all levels, have begun adapting to this new reality, as we always have ever since those first aforementioned cuneiform days (it was hard to cheat in the strictly oral culture preceding them). High schools and colleges alike report returning to blue books for exams and in-class writing for essays. Hand-written lab notebooks are making a comeback in the sciences, and at least two universities, Purdue and Ohio State, have now made proficiency with AI in one’s matriculating discipline a graduation requirement because A) there is the practical need for individuals in general to be able to distinguish truth from fiction and because B) you won’t be able to do your job in the future without such knowledge. As one microbiologist put it:

AI has already “revolutionized” her field. Recent research suggests that AI-enabled analysis of large genomic data sets, for instance, is allowing scientists to look at DNA directly from environmental samples, revealing entire ecosystems of previously unknown microbes.

In other words, there are questions of value in need of answers that the human brain does not have the computing power to solve but which our brain does have the critical thinking to put to meaningful purpose. AI can do things we can’t; we just need to stop surrendering to it the things we can do that it can’t.

The challenge, therefore, is to determine where AI has value in educational situations and where active resistance to it needs to take place. For instance, if we know a climate of anti-intelligence threatens proper brain development, then we need to pay careful attention to how we construct pre-primary and early-childhood educational environments and experiences, and we need to teach parents not to park their toddler(s) in front of an iPad, no matter how exhausted the work-day may have left them. Knowing that screen time inhibits neural activity, we need to plan lessons that don’t require extensive use of computers, and we need to either collect cellphones at the start of the school day (as so many K-12 institutions are finally doing) or ban them from being out in the classroom (as so many colleges and universities now do).

At the same time, where an AI program can enhance educational investigation in ways no human brain can ever accomplish, then designing lessons to actively employ it adds value to the learning. For example, if I want my students to explore the actual attitudes of Americans about gun control, I can have them see how many times any type of restriction has been proposed by every level of legislature in the land. Or if I want them to have a better understanding of a pastiche before making them hand-write their own, I can have them generate such a thing from an entire body of an author’s work. Indeed, in my discipline, the sciences, where genuinely enormous databases are the rule rather than the exception, the potential uses of AI to enhance student learning are almost too numerous to list here. The bottom line is that there are lots of potential positive possibilities for education’s frenemy in the classroom; they just require wise discernment on the part of the teacher.

But that is perhaps the greatest challenge for dinosaurs such as me when it comes to AI and teaching because I have zero interest in artificial intelligence. Period. In fact, I would go so far as to say I have negative interest; I am even actively opposed to it. The simple truth is that I relish difficult thinking. I enjoy the excitement from the intellectual uncertainty of being “lost” and finding my way “home.” To state the obvious, I treasure the blank page and what it is going to demand of me to fill it. I am “the life of the mind.” Thus, learning that Google now spontaneously generates transcripts of my video lectures simply fills me with annoyance since I will now have to reconfigure how I have my students employ them in their learning. I know I must adapt as an educator to this changing environment as I have so many times before, and I know that I will do so. But after 37 years of adapting, I’m starting to appreciate my grandfather’s attitude when VCRs arrived on the scene (and this from a man who was born before airplanes and lived to see the space shuttles): nope; done; don’t want to deal with this.

Maybe I can find an AI that can help.

References

Millet, L. (2024) We Loved It All: A Memory of Life. New York: W. W. Norton & Company.

Moore, T. (Sept. 8, 2025) Jelly Beans for Grapes: How AI Can Erode Students’ Creativity. EdSurge. https://www.edsurge.com/news/2025-09-08-jelly-beans-for-grapes-how-ai-can-erode-students-creativity.

Nosta, J. (Jan. 22, 2026) Growing Up Anti-Intelligent. Psychology Today. https://www.psychologytoday.com/ca/blog/the-digital-self/202601/growing-up-anti-intelligent.

Toppo, G. (Feb. 17, 2026) At These Universities, Using AI Isn’t Shunned–It’s a Graduation Requirement. The 74. https://www.the74million.org/article/at-these-universities-using-ai-isnt-shunned-its-a-graduation-requirement/.